Reviewing NEE’s 2020 Recertification Training

As part of our continuous improvement cycle at NEE, we look at data from previous years’ training to inform decisions about future training. We have completed an initial analysis of the Summer 2020 NEE recertification trainings, and I want to break down what we’ve learned and what it means moving forward.

NEE recertification trainings are annually recurring sessions that are required for each evaluating administrator in a member district. Evaluating teaching is a different skill set from teaching, especially when the evaluation is done correctly. The annual training process is a chance to re-introduce each evaluator to, and immerse them in, the psychometric process and frame of mind needed to provide accurate observations of effective teaching practices.

As with most things in 2020, NEE training was not typical this past summer as we were not able to offer an in-person training option. Instead, NEE offered two types of online training: a synchronous version conducted via Zoom and a pilot on-demand process that was available to select participants.

Let’s first dig into what we learned from the training evaluations that came back from those processes.

What Went Well

Both recertification platforms we used this year – through Zoom and on-demand – received very similar feedback. Most encouragingly, over 97% of participants enjoyed the trainings and felt the trainings fit their needs, learning goals, and time capacities.

Participants in both sessions also commented on how the “frame of reference” video process helped them recalibrate to the language of each observation rubric. This is our second year of the frame of reference process, and we agree – it does seem to provide more details and definitions for what each specific teaching practice should look like at varying levels of effectiveness.

As we advance, we look forward to continuing the frame of reference process and expanding it to be more inclusive of classroom types, including a wider variety of content areas, career-tech classrooms, and early childhood classrooms.

Under Consideration

The online recertification process also significantly changed one aspect of a typical in-person training: peer-to-peer discussion. Participants in both types of online trainings suggested that the chance to connect and talk through effective teaching practices was missed. Zoom participants indicated there was a chance for some discussion and enjoyed the variety of discussion options: polling, chat, and voice. On-demand, of course, was much more individualized and isolated, without a chance to discuss. While the lack of discussion did not faze all participants in the on-demand pilot, it was noted.

That feedback helps inform us as we think about the platforms we utilize moving forward. We know there is a desire for discussion-based trainings and also a desire for more individualized options. Our team will continue to dedicate thought and effort to providing as much adaptability and choice as we can in how we deliver training.

Exam Data

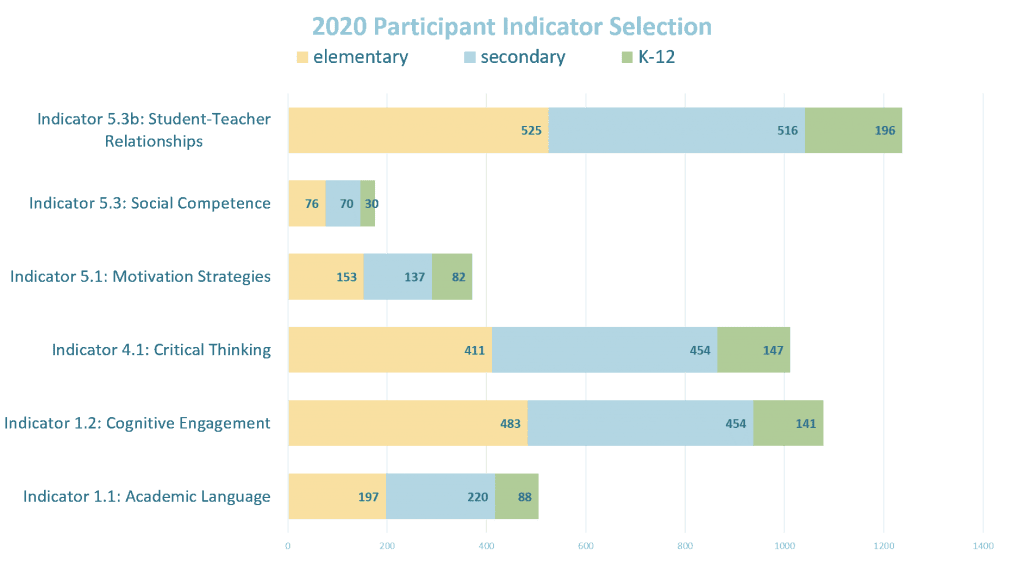

While we look at training evaluations, we also are informed by the annual exam each participant takes to conclude their recertification training. Our big goal from training is to build longitudinal precision and accuracy for each individual evaluator and across the network. For the past few years, we have been able to offer flexibility within the qualifying exam to fit most, but not all, daily environments our network members experience. This includes providing flexibility in which indicators are included within the exam.

New this year, we also provided flexibility based on the grade levels an evaluator wanted to be assessed on; evaluators could choose K-5 classrooms, 6-12 classrooms, or a combination of the two. In all, 617 evaluators selected the secondary exam, 615 selected the elementary exam, and 228 selected the combination exam.

In 2020, like every year, all 1,460 participants were assessed on Indicator 7.4 (monitoring the effect of instruction on individuals and the whole class). Along with that indicator, participants chose three other indicators to build their exam. The most commonly selected indicator was Indicator 5.3b (student-teacher relationships), with 1,237 participants selecting that effective teaching practice. After that, Indicator 1.2 (cognitive engagement) and Indicator 4.1 (critical thinking and problem-solving) were each selected more than 1,000 times.

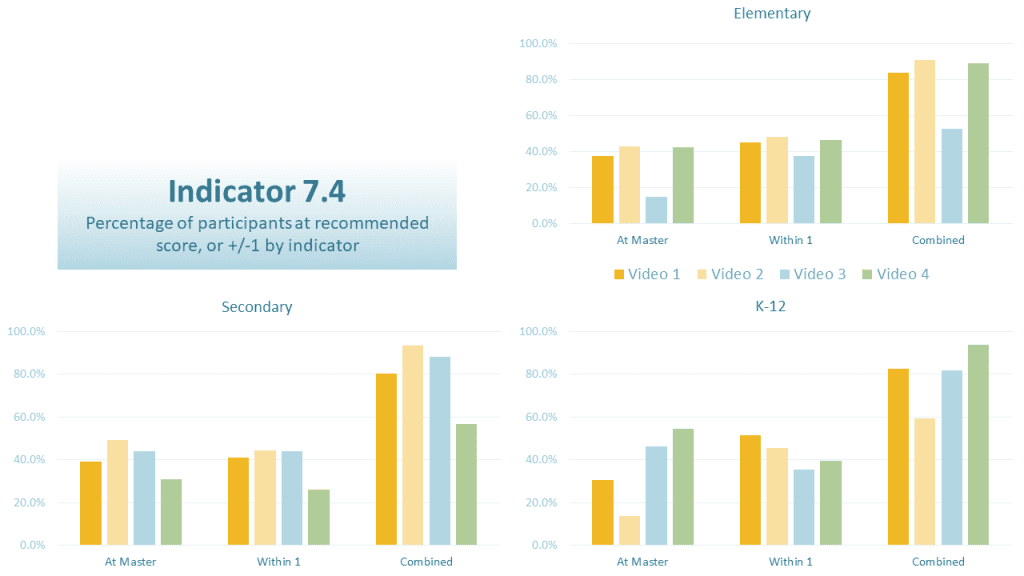

A Closer Look at Indicator 7.4

The graph above shows the percentage of participants who scored at or within 1 point of our recommended, or master, score on Indicator 7.4. The bars on the graph represent each of the four videos that participants scored. The encouraging news is that for each of the groups, 80% of participants scored at or within 1 point of the recommended score on 3 of the 4 videos. That said, we would still like to see a higher percentage of scores at the master level, and fewer at the +1/-1 level.

Without showing every piece of data – the frequencies, the graphs, etc. – I did want to share this as it provides some insight into what we continue to work on: precision and accuracy. In the name of precision, we would like to see a higher percentage of scores that exactly match our recommended master score. On the accuracy side, while 80% on 3 of the 4 videos is good, we would like to see that improve as well.
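For readers who like to see the arithmetic, here is a minimal Python sketch of the two rates described above for a single video: the share of scores that exactly match the master score, and the share that fall at or within 1 point of it. The function name `agreement_rates` and the sample scores are made up for illustration; this is not NEE's analysis code, and the numbers are not exam data.

```python
from typing import Sequence


def agreement_rates(scores: Sequence[int], master: int) -> tuple[float, float]:
    """Return (exact, within_one) agreement rates as fractions of all scores.

    exact      -- share of scores equal to the master score
    within_one -- share of scores at or within 1 point of the master score
    """
    if not scores:
        raise ValueError("scores must not be empty")
    n = len(scores)
    exact = sum(1 for s in scores if s == master)
    within_one = sum(1 for s in scores if abs(s - master) <= 1)
    return exact / n, within_one / n


# Hypothetical scores from ten evaluators on one video (illustrative only).
example_scores = [5, 5, 4, 6, 3, 5, 7, 4, 5, 6]
exact_rate, within_one_rate = agreement_rates(example_scores, master=5)
print(f"exact: {exact_rate:.0%}, within one point: {within_one_rate:.0%}")
```

In this made-up example, most evaluators land within 1 point of the master score, but fewer than half match it exactly. That gap between the two rates is the kind of difference we are trying to close.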

This does give us plenty of further data to analyze and to work through, and we are doing that – not only for 2020 but over time. By Summer 2021, we hope to have some insight from that longitudinal data that gives us more understanding of the intricacies of observing effective teaching practices in a classroom environment.

And that gets me back to one of my earlier points: training. Every year, we receive some evaluation comments that trend towards the idea that the annual requirement is unneeded.

Unfortunately, we are not at that point yet. We do not yet have the data to support a move away from annual training, and that’s why we keep working at this together.

Starting next week, we will convene our annual master scoring process. This brings together administrators from across the network who have scored at 87.5% accuracy or above on two of the previous three annual exams. They will work together in small groups to score and provide commentary on 24 more classroom videos. Those 24 videos will be used in a variety of ways. Some will become part of the annual exams, some will move into the EdHub Library, and some will stay in our internal library awaiting further analysis and understanding. Master scoring is an integral part of our continuous improvement cycle. Thank you to each administrator who has been and will be a part of that master scoring process over the coming months. You have each helped us become better in the training we offer and the resources we make available to our network of schools.

What It All Means

At the same time as the master scoring process is going on, the NEE member services team will be developing training processes and content for Summer 2021. We will continue to provide training on multiple platforms. We will also continue to develop training in a way that works toward accuracy and precision for each network member and the whole network. Together we will continue to improve our understanding through research and analysis, which will improve the way we deliver and support the implementation of effective observations.

We also have a goal of increasing our video library to include more early childhood classrooms, more career-technical education classrooms, and a wider variety of content areas. That goal hit a snag this year as we don’t have the same access to classrooms as normal. However, as we move forward, we will continue to seek out administrators, schools, and teachers who would be willing to let us into their buildings to record the teaching that occurs every day across the network.

If you want to learn more, please feel free to email me at hairstontw@missouri.edu with any questions you may have.

Tom Hairston is the Managing Director of the Network for Educator Effectiveness. Tom has worked with NEE since 2011. Prior to his work with NEE, he worked for two years as a Positive Behavioral Interventions & Supports Consultant for the Heart of Missouri Regional Professional Development Center at the University of Missouri. He began his career in education as a high school special education and language arts teacher and football coach at Moberly High School in Moberly, Mo. Tom received his PhD in Educational Leadership and Policy Analysis from the University of Missouri in 2012.

The Network for Educator Effectiveness (NEE) is a simple yet powerful comprehensive system for educator evaluation that helps educators grow, students learn, and schools improve. Developed by preK-12 practitioners and experts at the University of Missouri, NEE brings together classroom observation, student feedback, teacher curriculum planning, and professional development as measures of effectiveness in a secure online portal designed to promote educator growth and development.